The sovereignty trap

The Pentagon wants unlimited access to the most powerful AI systems. Anthropic said no. The question that remains is whether democracy can survive either answer.

Foreign Affairs Newsletter

Written by Luca Salvemini

No. 151 - March 1, 2026

The capture of El Mencho, the most powerful Mexican drug lord, closes an era. But the scene tells a larger story: Washington tightening its grip on the Western hemisphere, determined to seal the southern border, block fentanyl, stop migrants. Mexico at the center, as always.

It is a country with a unique position in the world. It shares over 3,000 kilometers of border with the hegemon. It holds the largest emigrant community in the United States — millions of Spanish-speaking people, viscerally attached to their homeland and attuned to the rhetoric of President Claudia Sheinbaum. It has a commercial and industrial interconnection with Washington that few other countries in the world can match.

It is, in short, a knife pressed against the star-spangled underbelly. And it knows it.

This is why I have spent weeks studying Mexico: its history, its geography, its trajectory. The work will take shape in the first issue of Strategic Atlas, the new monthly column reserved for subscribers. It arrives on March 23rd.

Details on how to subscribe are at the bottom of this email.

The news emerging from Iran is nothing short of extraordinary and historically consequential.

I will cover it in my column First Light Brief, and this coming Sunday I will devote a full issue to the dramatic turn of events unfolding in these hours, including the joint attack by Israel and the United States and the reported death of Supreme Leader Ali Khamenei.

Today, however, I intend to turn to another issue of immense significance that has surfaced in recent days, and I would like to begin from the following statement.

“Disagreeing with the government is the most American thing in the world.”

This is the closing line of the interview (worth listening to) that Dario Amodei, co-founder and CEO of Anthropic, one of the leading artificial intelligence companies, gave to CBS following his recent confrontation with the Pentagon and the American administration.

For those still unfamiliar with it, Anthropic is a company founded by a group of former OpenAI employees, beginning with the Italian-American siblings Dario and Daniela Amodei.

From the outset, it distinguished itself within the American big tech landscape through a positioning that reflects the dilemmas of the age of political capitalism.

Anthropic was the first AI company to sign contracts worth hundreds of millions of dollars with the Pentagon for the management of classified documents tied to national security. Its systems are now used by numerous US military contractors, integrated directly into their services or deployed to develop them.

As Alessandro Aresu has noted, Amodei places himself in direct opposition to the techno-political current represented by Jensen Huang’s stance on China. He insists on the need to maintain and strengthen export controls — identifying his company’s interests with American interests — in contrast to the equally self-interested dovish position of the NVIDIA chief.

The rivalry has generated sharp polemics, though it has not prevented the two from doing business together.

At the same time, Anthropic confronts a crucial dilemma of the national security era.

It claims to preserve, more than OpenAI, the mission of an artificial intelligence that is not entirely subordinated to commercial and political constraints, but rather “constitutional” in nature.

This dilemma connects to a broader thesis that has gained traction in the United States in response to the controversy between Google and the deep state over Project Maven: the thesis of a new military-industrial complex, embodied by companies such as Palantir and Anduril, among others.

It is within this dynamic that the recent and bitter dispute between Secretary of Defense Pete Hegseth and Dario Amodei must be understood.

In brief: Hegseth wants to integrate the company’s AI systems into military defense programs without restrictions.

Anthropic has instead demanded guarantees and safeguards on their use, imposing two red lines — a prohibition on using its systems to develop fully autonomous weapons, and a prohibition on building and implementing mass surveillance programs.

The Pentagon’s pressure over the past two weeks has become suffocating. Hegseth issued Anthropic an ultimatum, demanding a definitive answer by 5:01 PM on Friday, February 27th.

Anthropic, through its founder, chose not to yield.

It published a statement on its website declaring that it would not grant access to its artificial intelligence systems, given the absence of any guarantee that they would not be used for mass surveillance or for the development of weapons entirely beyond human control.

In the statement, Amodei addresses several decisive points in the debate on artificial intelligence: the guardrails that must be established, and how these must necessarily derive from legislation that today, moving too slowly and paralyzed by competing vetoes, cannot keep pace with technological advancement.

“The use of these systems for domestic mass surveillance is incompatible with democratic values.

To the extent that such surveillance is currently legal, that is only because the law has not yet kept pace with the rapidly growing capabilities of AI.”

Under existing legislation, the government may purchase from public sources detailed records of the movements, web browsing, and associations of American citizens without obtaining a warrant.

A powerful AI makes it possible to assemble these scattered, individually innocuous data points into a comprehensive picture of any person’s life, automatically and at massive scale.

Amodei’s statement also reaffirms that “today’s frontier AI systems are simply not reliable enough to power fully autonomous weapons.”

The Pentagon’s reaction has been severe and unprecedented.

All of Anthropic’s contracts with federal government agencies have been revoked, and the company has been classified as a “supply chain risk” — a designation previously reserved exclusively for foreign entities, predominantly Russian and Chinese, and never before applied to an American company.

At the same time, in a troubling display of contradictory logic, Hegseth announced he is considering invoking the Defense Production Act of 1950 to compel the company’s operations on grounds of national defense and security.

A company is first punished, stripped of all federal contracts and branded a risky supplier, then subjected to coercion on the grounds that it is an essential provider for national security.

Sheer hysteria.

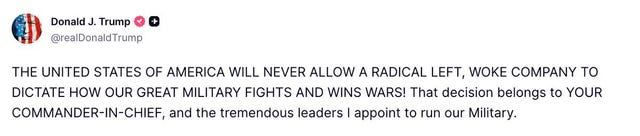

Meanwhile, on Truth Social, Trump labeled the company “far-left and woke” and called its refusal to comply a “disastrous mistake.”

The central point is that Anthropic is not, by any reasonable measure, a company of radical leftists.

It holds a contract worth approximately 200 million dollars with the Department of Defense and is currently, as far as is publicly known, the foremost frontier model developer to have supplied its technology, including the Claude model, to Washington’s classified digital networks, contributing capabilities in simulation, operational planning, and offensive cyber operations.

This role was invoked in connection with the raid that led to the capture of Maduro.

Here lies the paradox of national security: it is no longer a question of technological capabilities, but of who commands those capabilities.

The Anthropic case, still unfolding, illuminates once more the tensions between technology, commercial development, and national security that will continue to define the age of artificial intelligence.

In an era of intensifying geopolitical competition, Silicon Valley faces difficult choices, far removed from the optimism that accompanied the rise of social networks and the promise of global human interconnection.

Technology is becoming an instrument of military power and is steadily ceasing to function as a medium of disintermediation and neutrality.

The Big Tech companies, through the pervasiveness of AI, have acquired a new power of mediation: in the realm of knowledge and in the realm of defense alike.

On the opposing side from Anthropic stand companies such as X, Palantir Technologies, Anduril Industries, and OpenAI, the last of which was selected as the replacement provider.

These companies display more explicit political support for the Trump administration, accompanied by a broad and substantially unconditional willingness to deploy their artificial intelligence systems within the operations of the American military.

The most emblematic case is Palantir. Its Gotham software enables the analysis and cross-referencing of vast heterogeneous datasets, up to the construction of high-precision individual profiles.

ICE paid the company 30 million dollars for “ImmigrationOS,” a system designed to monitor undocumented immigrants and select individuals for deportation.

The criticisms directed at Palantir concern the absence of transparency and the risk that predictive tools, built on big data and artificial intelligence, end up flagging individuals on the basis of ethnic or religious characteristics.

In recent months, the company has deepened its relationships with the Pentagon, the Department of Homeland Security, the Social Security Administration, and the Internal Revenue Service, all of which will use its Foundry platform to optimize their processes.

It has also secured a ten-billion-dollar, decade-long contract with the United States Army.

CEO Alex Karp claims a “consistently pro-Western vision” and the defense of a Western way of life he considers superior.

It is a declaration that clarifies the ideological framework: technology and national security as a single, unified horizon.

On one side, then, a model emerges in which technological advancement is entirely functional to the consolidation of state power. In exchange for a laissez-faire regulatory environment, companies develop increasingly powerful systems destined to flow without barriers into the public arsenal. The consequence is plain: in a loosened normative context, the state asserts its role as the primary user of AI.

Technologies cease to be the exclusive patrimony of the company that developed them and become instruments permanently embedded in the national security apparatus.

On the other side stand Dario Amodei and Anthropic, and with them a sensibility closely aligned with the European approach: artificial intelligence must remain under human governance, subject to limits and safeguards, and must not be automatically available for military or coercive applications.

The theoretical crux emerges here: is a power truly sovereign if a private company can restrict access to a technology that may prove decisive on the military plane?

The Pentagon answers in the negative.

As Mike Froman of the Council on Foreign Relations observes, the dispute arises within a market economy, where companies are free to determine the conditions under which they supply their products.

But it unfolds equally within a constitutional republic, in which the state holds, by democratic mandate, the monopoly on the use of force.

Even if the Anthropic case were to reach a resolution, the structural question remains: must private companies limit themselves to producing reliable tools, leaving the government full discretionary power over their use, or can they shape the very definition of legitimate applications?

The paradox is this: when a technology becomes strategic, it ceases to be a market product and is absorbed into the sphere of sovereignty. It no longer belongs to Silicon Valley. It belongs to the state.

The question, however, does not exhaust itself in the state’s claim to unrestricted access.

If sovereignty implies the capacity to deploy the most powerful models without constraint, who exercises control over that power?

A democracy rests on the legitimate monopoly of force, and equally on its limitation. If artificial intelligence becomes a potentially unlimited multiplier of coercive capacity, the boundary between security and domination blurs until it can no longer be seen.

The problem, then, is not only whether the United States can call itself sovereign without full access to the most advanced models.

It is whether citizens can continue to call themselves sovereign when those same models are deployed without effective counterweights in the name of their protection.

This is where sovereignty, in the age of artificial intelligence, risks inverting into its own opposite.

Of every technology that's ever rolled out, if there is a single poster child for the desirability of rolling it out in a variable legal/regulatory and policy environment, AI may very well be it.

There is a contradiction in the essay. You write: "But it unfolds equally within a constitutional republic, in which the state holds, by democratic mandate.... is absorbed into the sphere of sovereignty. It no longer belongs to Silicon Valley. It belongs to the state." But that is not a democratic conceptualization of sovereignty. The citizens collectively hold sovereignty; the state is their agent.

Dario is a con man. Anyone suggesting he’s a billionaire with a heart of gold is just plain silly.

Anthropic was spun out of OpenAI, which was spun out of Lawrence labs BAIR program and Google Brain.

Amazon, Google, and Microsoft own both OpenAI and Anthropic. This is so basic it’s silly anyone can fall for the theater.

It reminds me of zuck cage fighting fatboy Musk. How many of you fall for this WWF reality show?